The Problem.

Eligible users were dropping off during onboarding at the access code field, an 8-digit code sent via email that required users to leave the application page to retrieve it. This single point of friction was contributing to roughly a 7% drop-off rate, creating an unnecessary gap between eligibility and enrollment.

With client demand shifting and competitors already removing access codes from their front-end flows, the question became: Does the access code field actually impact application-to-submission conversion?

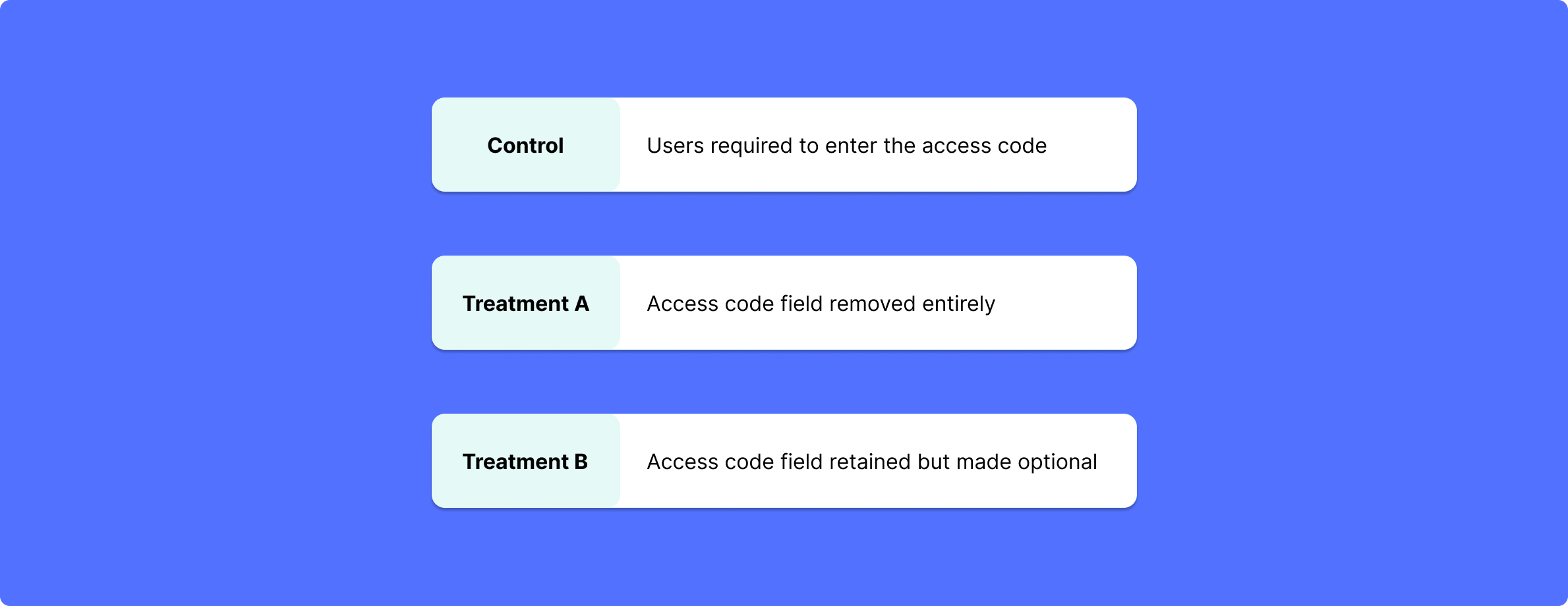

The Test. To find out, I designed and executed an A/B test across 12,000+ users with two treatment arms and one control group:

The Result. Removing the access code field drove a 6.24% lift in application-to-enrollment submissions during the A/B test. This validated the friction hypothesis and provided data-backed justification for a roadmap change whose value had previously been assumed but never proven.

The access code field removal shipped in October 2025, and improvements to the onboarding flow are ongoing.

The Problem. Users were arriving at Omada's onboarding motivated to improve their health. However, limited clarity about the program's scope and requirements led to confusion, drop-off risk, and misaligned expectations down the line.

Compounding this, Omada hadn't benchmarked its onboarding against competitors in three years, leaving the team without a clear picture of where it stood at a critical conversion moment.

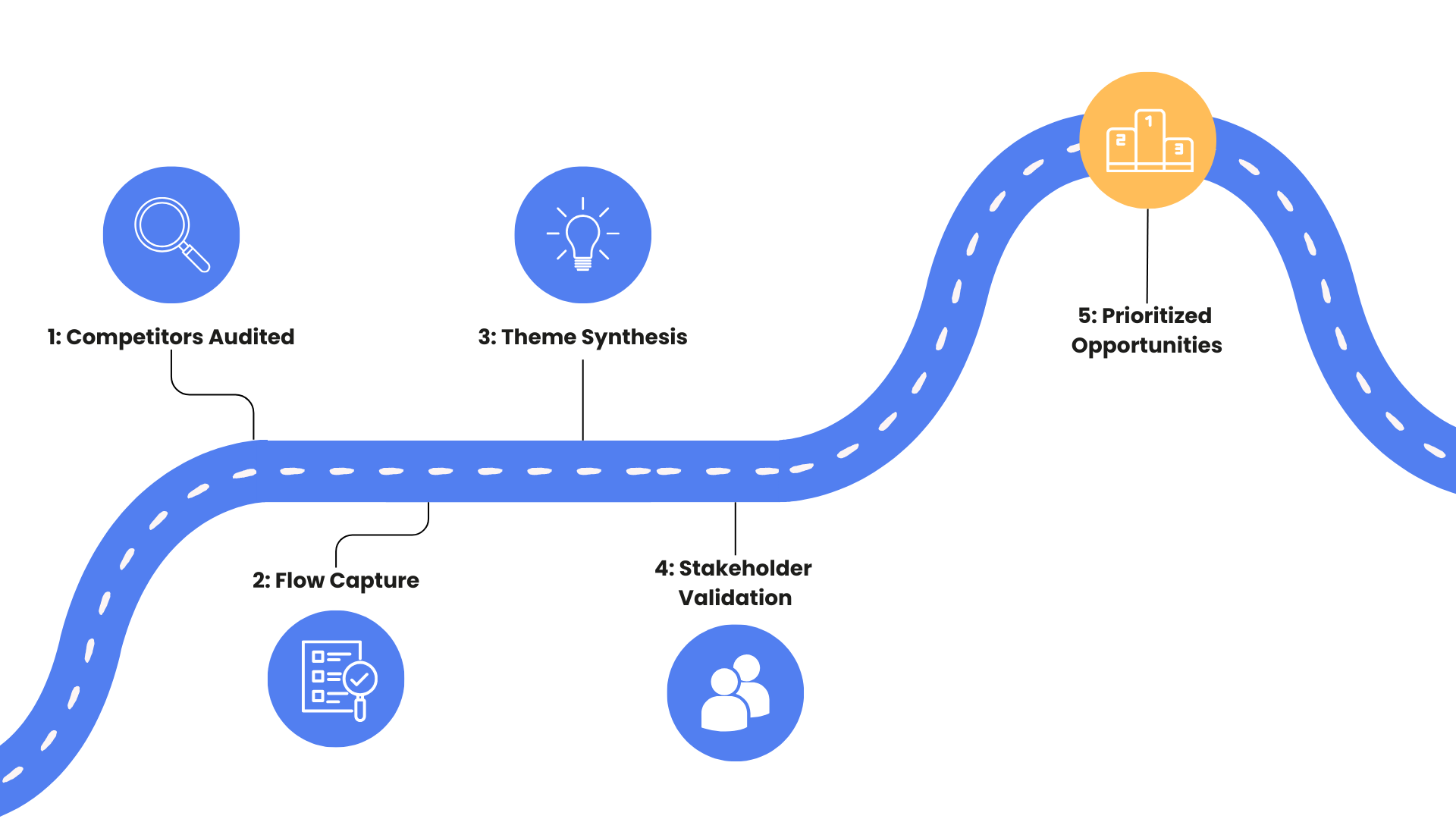

The Process. I conducted a firsthand competitive audit across 12 platforms (direct, adjacent, and out-of-category competitors), going through each sign-up flow as an actual user would. Rather than relying on secondary research, I documented every experience screen by screen, mapping what information was requested, in what order, and how each product communicated value along the way. I also flagged two emerging players, Hello Heart and 9amHealth, as future threats worth monitoring.

Insights were then synthesized into recurring themes, validated with cross-functional stakeholders across marketing, sales, product, and UX, and prioritized based on competitive prevalence, whitespace gaps, and business impact.

The Recommendations. Three strategic opportunities emerged from the analysis:

The Result. The analysis was presented to the Enrollment team and relevant stakeholders at the close of my internship. It delivered a prioritized framework for onboarding improvements, organized by level of effort and potential impact, which the team could use to align cross-functionally and sequence A/B tests. By anchoring every recommendation in competitive evidence and proposing testable variants, the work moved directly from insight to an actionable roadmap.

Impact & Retrospective

What I Learned.

This internship reinforced that the most impactful product decisions aren't always the most complex ones. A single form field was quietly eroding conversion at scale, and a competitive landscape left unexamined for three years was leaving clear opportunities on the table. What unlocked both was the same approach: pairing strong product instincts with rigorous process, through controlled experimentation in one case and firsthand research in the other.

Equally important was learning how to make findings land. Whether presenting A/B test results or competitive recommendations, building credibility with cross-functional stakeholders required grounding every insight in evidence and connecting it clearly to business outcomes. Good analysis doesn't speak for itself; it needs to be shaped for the audience it's meant to move.